A 3D gameplay proof of concept in which players explore fantasy environments, talk to AI-driven NPCs using live voice input, and complete dynamic, LLM-managed quests.

You can download the Windows build to test full voice input capabilities (the web build is restricted to text only).

Key Contributions

LLM-Powered NPC Interaction · AI Integration · Real-Time Dialogue

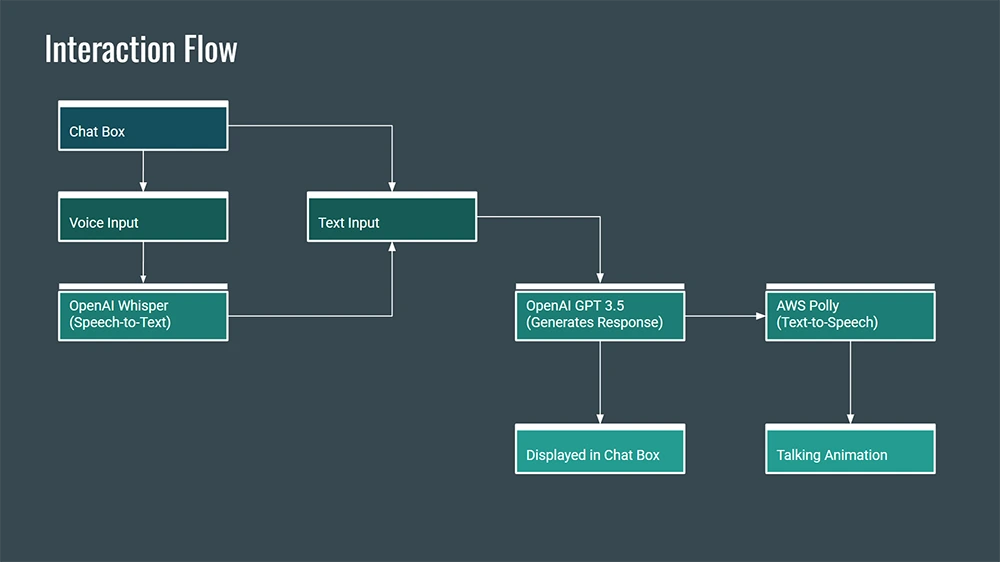

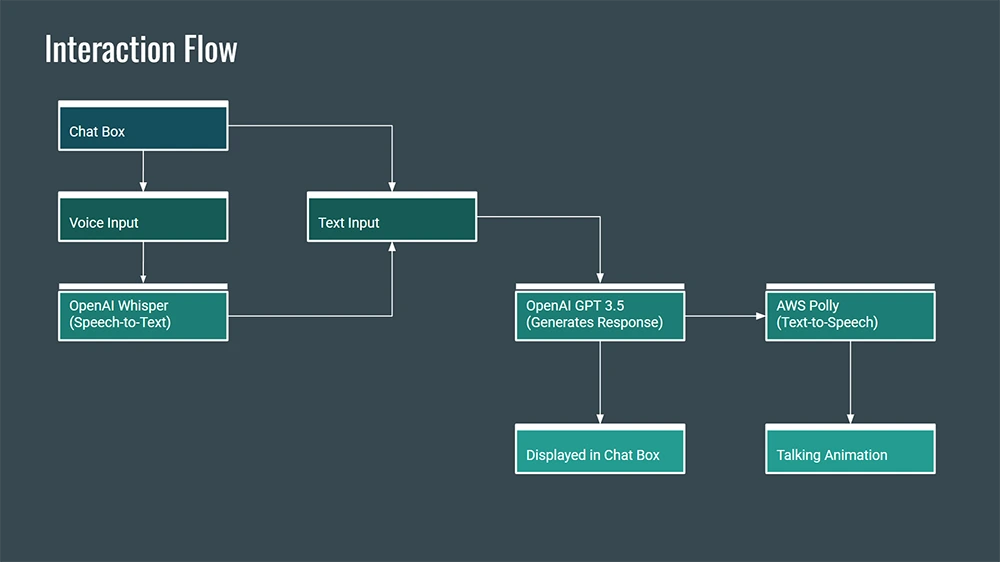

- Integrated OpenAI Whisper, GPT, and AWS Polly into Unity to create intelligent characters capable of speech recognition, real-time contextual dialogue, and voice synthesis.

- Architected a complete interaction pipeline that transcribes player input, feeds it to an LLM logic layer, and synthesizes behavioral and verbal responses.

- Enabled NPC-driven mini-quests by injecting real-time gameplay context into the LLM prompts.
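The transcribe, prompt, and synthesize flow described above can be sketched roughly as follows. This is a minimal illustration, not the project's actual code: `ISpeechToText`, `IDialogueModel`, `ISpeechSynthesizer`, `NpcReply`, and `NpcInteractionPipeline` are hypothetical names standing in for wrappers around Whisper, GPT, and Polly, and the prompt layout is an assumption. Behavioral responses are left out to keep the sketch short.

```csharp
using System.Threading.Tasks;

// Hypothetical abstractions; the real project wraps Whisper, GPT, and Polly behind its own types.
public interface ISpeechToText { Task<string> TranscribeAsync(byte[] audio); }
public interface IDialogueModel { Task<string> CompleteAsync(string prompt); }
public interface ISpeechSynthesizer { Task<byte[]> SynthesizeAsync(string text); }

// Text plus synthesized audio for one NPC turn.
public sealed class NpcReply
{
    public NpcReply(string text, byte[] audio) { Text = text; Audio = audio; }
    public string Text { get; }
    public byte[] Audio { get; }
}

public sealed class NpcInteractionPipeline
{
    private readonly ISpeechToText speechToText;
    private readonly IDialogueModel dialogueModel;
    private readonly ISpeechSynthesizer synthesizer;

    public NpcInteractionPipeline(ISpeechToText stt, IDialogueModel model, ISpeechSynthesizer tts)
    {
        speechToText = stt;
        dialogueModel = model;
        synthesizer = tts;
    }

    // Assumed prompt layout: persona, then live gameplay context, then the player's words.
    public static string BuildPrompt(string persona, string gameplayContext, string playerLine)
    {
        return "Persona: " + persona + "\n"
             + "Game state: " + gameplayContext + "\n"
             + "Player says: " + playerLine;
    }

    public async Task<NpcReply> RespondAsync(byte[] playerAudio, string persona, string gameplayContext)
    {
        // 1. Transcribe the player's voice input.
        string playerLine = await speechToText.TranscribeAsync(playerAudio);

        // 2. Ask the language model for a reply, with gameplay context injected into the prompt.
        string prompt = BuildPrompt(persona, gameplayContext, playerLine);
        string replyText = await dialogueModel.CompleteAsync(prompt);

        // 3. Turn the reply into audio for the NPC to speak.
        byte[] replyAudio = await synthesizer.SynthesizeAsync(replyText);
        return new NpcReply(replyText, replyAudio);
    }
}
```

Keeping each stage behind its own interface means a text-only build could swap in a transcriber that simply passes typed input through, without touching the rest of the pipeline.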

Proof-of-Concept Development · Systems Design · Rapid Iteration

- Developed the entire proof of concept as the sole engineer and technical designer.

- Validated AI-driven interactions inside a real mechanical gameplay loop rather than a sterile chat window.

- Prioritized extensibility and clean system boundaries to show the architecture could scale to a full game.

NPC Architecture · Data-Driven Design · Reusability

- Built ScriptableObject-based NPC personas that cleanly define names, backstories, and personality traits.

- Configured personality traits as reusable data assets rather than tying them to individual prefab instances.

- Engineered a single modular NPC prefab capable of handling multiple variants (patrolling, quest-giving, stationary).

- Decoupled NPC identity logic from execution behavior to ensure scalable asset management.
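One way the persona assets above might be laid out is sketched below. The class names (`PersonalityTrait`, `NpcPersona`, `NpcController`), the fields, and the menu paths are assumptions for illustration, not the project's actual schema. In a Unity project each class would live in its own file.

```csharp
using System.Linq;
using UnityEngine;

// Hypothetical trait asset: one reusable piece of personality data.
[CreateAssetMenu(menuName = "NPC/Personality Trait")]
public class PersonalityTrait : ScriptableObject
{
    [SerializeField, TextArea] private string description;
    public string Description => description;
}

// Hypothetical persona asset: identity data only, no behavior.
[CreateAssetMenu(menuName = "NPC/Persona")]
public class NpcPersona : ScriptableObject
{
    [SerializeField] private string displayName;
    [SerializeField, TextArea] private string backstory;
    [SerializeField] private PersonalityTrait[] traits = new PersonalityTrait[0];

    public string DisplayName => displayName;
    public string Backstory => backstory;

    // Flattens the persona into text that could be placed in an LLM prompt.
    public string DescribeForPrompt()
    {
        string traitText = string.Join("; ",
            traits.Where(t => t != null).Select(t => t.Description));
        return displayName + ". " + backstory + " Traits: " + traitText;
    }
}

// Component on the shared NPC prefab; it reads whichever persona is assigned in the Inspector.
public class NpcController : MonoBehaviour
{
    [SerializeField] private NpcPersona persona;
    public NpcPersona Persona => persona;
}
```

Because the prefab only holds a reference to a persona asset, the same prefab can be reused for a different character by assigning a different asset.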

Interface-Driven Gameplay Contracts · Decoupling · Extensibility

- Defined strict interaction and quest contracts using C# interfaces.

- Enabled objects, NPCs, and AI components to collaborate without holding concrete dependencies on one another.

IQuest

- Supported discrete, unique logic per quest while exposing an intentionally generic execution layer.

- Allowed LLM-powered NPCs to drive quest state transitions purely from natural-language input.

```csharp
public interface IQuest
{
    bool IsActive { get; }

    void OnQuestStart();

    void OnQuestEnd();

    void OnPlayerResponse(string response);
}
```
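As an illustration of quest-specific logic behind the generic `IQuest` contract above, here is a small hypothetical quest. `RiddleQuest` and its `IsSolved` property are invented for this sketch, and it assumes `response` carries the player's transcribed reply (or a keyword the LLM layer extracted from it).

```csharp
using System;

// Hypothetical quest: the NPC poses a riddle and the quest ends on the correct answer.
public sealed class RiddleQuest : IQuest
{
    private readonly string expectedAnswer;

    public RiddleQuest(string expectedAnswer)
    {
        this.expectedAnswer = expectedAnswer ?? throw new ArgumentNullException(nameof(expectedAnswer));
    }

    public bool IsActive { get; private set; }
    public bool IsSolved { get; private set; }

    public void OnQuestStart()
    {
        IsActive = true;
        IsSolved = false;
    }

    public void OnQuestEnd()
    {
        IsActive = false;
    }

    // Ignores input while inactive; a case-insensitive match on the answer completes the quest.
    public void OnPlayerResponse(string response)
    {
        if (!IsActive || string.IsNullOrWhiteSpace(response)) return;

        if (response.IndexOf(expectedAnswer, StringComparison.OrdinalIgnoreCase) >= 0)
        {
            IsSolved = true;
            OnQuestEnd();
        }
    }
}
```

The caller only needs the interface: it starts the quest, forwards each player response, and checks `IsActive` to see whether the quest has finished.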

IInteractable

- Standardized activation behavior across all interactive scene entities (NPCs, chests, doors).

```csharp
public interface IInteractable
{
    void Interact();
}
```

Abstract Base Systems · Shared Behavior · Controlled Inheritance

- Used abstract base classes to keep shared state logic in one place while leaving the specifics to each implementation.

- Avoided code duplication, so complex logic was not repeated across similar interactions.

Abstract Door System


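```csharp
using System;
using UnityEngine;

public abstract class DoorBehaviour : MonoBehaviour
{
    // Assumption: the Animator bool parameter is named "IsOpen"; the original snippet omits this setup.
    [SerializeField] private string openParameter = "IsOpen";

    private Animator animator;
    private int animationHash;
    private bool isOpen;

    // Raised after the door has switched between open and closed.
    public event Action DoorStateChanged;

    protected virtual void Awake()
    {
        // Assumption: the optional Animator sits on the same GameObject as the door.
        animator = GetComponent<Animator>();
        animationHash = Animator.StringToHash(openParameter);
    }

    // Called by subclasses when their trigger condition is met.
    protected void OpenDoor()
    {
        if (isOpen) return;
        SetOpen(true);
    }

    // Closes the door once something leaves its trigger volume.
    private void OnTriggerExit(Collider other)
    {
        if (!isOpen) return;
        SetOpen(false);
    }

    private void SetOpen(bool open)
    {
        // Update state before notifying so listeners never act on a stale value.
        isOpen = open;
        if (animator) animator.SetBool(animationHash, open);
        DoorStateChanged?.Invoke();
    }
}
```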

- Centralized the required animation syncing and operational state rules.

- Enabled concrete implementation classes (e.g., InteractableDoor, AutomaticDoor) to simply focus on what triggers them.

- Exposed state changes through a C# event, letting external managers attach sounds or other logic without modifying the door classes.
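The two subclasses named above could look roughly like this. Their bodies are not shown in the original, so everything here is an assumed sketch: the `"Player"` tag, the `DoorSound` listener, and its serialized fields are hypothetical. In a Unity project each class would live in its own file.

```csharp
using UnityEngine;

// Opens when the player explicitly interacts with it (for example, by pressing a use key).
public class InteractableDoor : DoorBehaviour, IInteractable
{
    public void Interact()
    {
        OpenDoor();
    }
}

// Opens as soon as the player walks into its trigger volume.
public class AutomaticDoor : DoorBehaviour
{
    private void OnTriggerEnter(Collider other)
    {
        // Assumption: the player object carries the "Player" tag.
        if (other.CompareTag("Player")) OpenDoor();
    }
}

// Hypothetical external listener: reacts to state changes without knowing which door type raised them.
public class DoorSound : MonoBehaviour
{
    [SerializeField] private DoorBehaviour door;
    [SerializeField] private AudioSource audioSource;

    private void OnEnable()
    {
        door.DoorStateChanged += PlaySound;
    }

    private void OnDisable()
    {
        // Unsubscribe so a disabled or destroyed listener is not invoked later.
        door.DoorStateChanged -= PlaySound;
    }

    private void PlaySound()
    {
        audioSource.Play();
    }
}
```

Each subclass contains only its trigger condition; the animation and open/closed bookkeeping stay in `DoorBehaviour`.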